AI Is Probabilistic. Responsibility Isn’t.

The more I use AI, the more often I fight with it.

“Yes, that’s exactly what I asked for.” “No, that’s not what I meant.” “That’s not precise enough.” “Why are you giving me this?”

And recently I caught myself thinking: Are my expectations wrong? Is AI getting less capable? Am I prompting worse over time? Am I not adapting fast enough to how these systems evolve?

Why does it sometimes feel like I’m getting slower instead of faster? Like I have to correct more. Verify more. Rewrite more myself again.

Instead of feeling amplified, I feel responsible.

And that’s where it becomes interesting.

For now — and for most of us using AI in daily work — these systems generate answers by recombining patterns learned from existing human-created data and predefined objectives.

They don’t pull ideas out of thin air. They don’t access a moral compass. They don’t wake up with intuition.

They predict.

They calculate probability.

They optimize toward goals that humans have defined.

Even the most advanced models operate within architectures, guardrails, and incentives designed by people. They can retrieve new information, they can simulate, they can surprise us with combinations — but they are still bounded by what has been modeled, trained, and incentivized.

So when I expect something that doesn’t exist yet (a fundamentally new worldview, ethical judgment without guidance, original thinking beyond the horizon of existing knowledge), what exactly am I asking?

And if the output is wrong, incomplete, biased, or misleading… is the failure inside the machine?

Or somewhere inside the system around it?

And that system includes:

Me — as a user. Me — as a manager. Me — as a leader. Parents guiding children. Organizations designing workflows. Developers building architectures. Investors setting incentives. Regulators defining boundaries.

AI is probabilistic. Responsibility isn’t.

1. The Expectation Gap

I’ve started to notice something uncomfortable.

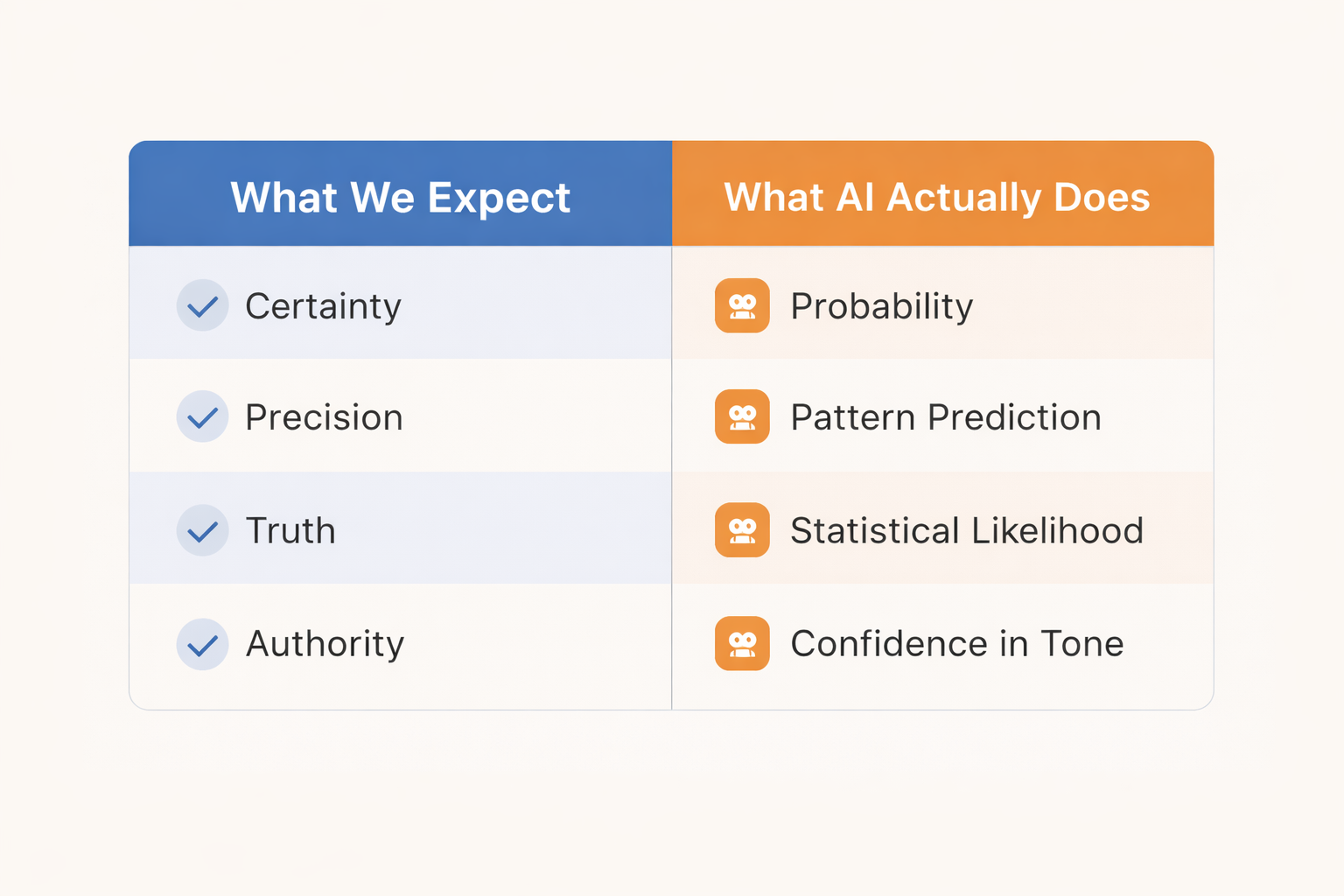

When I explain something to AI, I expect it to think like me. I expect it to follow my logic. My structure. My standards. My way of connecting ideas.

And maybe that’s the first illusion. Because I don’t just expect an answer. I expect my answer — only faster.

But AI doesn’t think like me. It doesn’t even think like a specific expert. It predicts based on patterns learned from vast amounts of human-generated data.

And that means something I don’t always like to admit: What it gives me is often not my logic. It’s an average of what most people have asked, written, published, or reinforced. AI doesn’t optimize for my intellectual edge. It optimizes for statistical likelihood.

And I don’t want to be average. So when I feel friction, maybe it’s not because AI is getting worse. Maybe it’s because I’m expecting certainty from a probabilistic system.

There’s another layer to this.

For decades, we’ve learned that machines are precise. If a calculator gives 4, it’s 4. If a machine cuts metal, it cuts exactly where programmed. If a system runs, it runs deterministically. Machines execute.

So subconsciously, we transfer that expectation to AI. Machine = precision.

But generative AI is not deterministic machinery. It is probability wrapped in confident language. And that confidence can be dangerous.
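To make that contrast concrete, here is a toy sketch in Python. It is an illustration only, not how any real model is built: the `NEXT_WORD_PROBS` table and its numbers are invented for the example, and `sample_answer` is a hypothetical helper. The calculator-style function returns the same result every time. The sampler draws from a probability table, so the same prompt can produce different answers, each delivered with the same confident tone.

```python
import random


def calculator(a: int, b: int) -> int:
    """Deterministic: the same inputs always give the same output."""
    return a + b


# Hypothetical probabilities for the next word after one fixed prompt.
# The words and numbers are invented for illustration.
NEXT_WORD_PROBS = {"Paris": 0.90, "Lyon": 0.07, "Berlin": 0.03}


def sample_answer(rng: random.Random) -> str:
    """Probabilistic: draw one answer according to its weight."""
    words = list(NEXT_WORD_PROBS)
    weights = list(NEXT_WORD_PROBS.values())
    return rng.choices(words, weights=weights, k=1)[0]


def answer_counts(n: int, seed: int = 0) -> dict:
    """Ask the same 'question' n times and count each answer."""
    rng = random.Random(seed)
    counts = {word: 0 for word in NEXT_WORD_PROBS}
    for _ in range(n):
        counts[sample_answer(rng)] += 1
    return counts


if __name__ == "__main__":
    print(calculator(2, 2))      # always the same result
    print(answer_counts(1000))   # mostly "Paris", occasionally something else
```

The most likely answer wins most of the time, but not every time, and nothing in the output itself tells you which case you are looking at.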

I’ve experienced this very directly.

I build custom GPTs. I define exactly what knowledge they contain. I know what is inside the system.

Then I ask a question. And sometimes it confidently tells me something that is not in its knowledge base. So I dig deeper. I ask again. I challenge it. And it constructs a full, coherent, convincing explanation about something that doesn’t even exist. The tone is structured. The logic seems clean. The language sounds authoritative. But it’s wrong.

And I know it’s wrong — because I know the boundaries of the knowledge.

Now imagine this: I create a custom GPT. I share it with clients. My clients do not know its boundaries. If it answers with confidence — they will assume correctness. If it creates something inaccurate — it doesn’t just generate an error. It creates expectations.

And wrong expectations make neither my clients nor me happy.

This is where I see the real expectation gap. We expect precision. We get probability. We hear confidence. We assume truth.

And unless we consciously design responsibility into the system around it — the gap widens.

2. The Efficiency Myth

Every time humanity invents a new tool, we tell ourselves the same story: This will make us more efficient.

And efficiency sounds like freedom.

If I’m faster, I can do more. If I do more, I can earn more. Produce more. Reach more people.

And over the last decade or two, the narrative evolved. If I’m more efficient, I’ll finally have time. Time to exercise. Time to cook properly. Time to read. Time to learn. Time to sit with people I love. Time for myself.

That was the promise. But in reality? We just keep running.

Tools get faster. Output increases. Communication accelerates. And instead of reclaiming time, we compress more into it.

AI is the newest acceleration layer.

It produces content in seconds. Research in minutes. Preparation in one prompt.

But here’s the question: If the output is fast but not verified — is it really efficient?

Is that text truly what I want to send? Is that research strong enough for negotiation preparation? Is that analysis solid enough for a strategic decision? Is that messaging precise enough for a sales conversation?

Or am I confusing speed with quality?

Because what I actually want isn’t faster decisions. I want better ones. Not more content. Content that communicates exactly what I mean. Not quicker preparation. Preparation that increases confidence and clarity.

Efficiency without verification is acceleration without direction.

And acceleration without direction just gets us lost faster.

3. Shared Responsibility

AI is not safe because it has guardrails.

It has guardrails because humans built them. And humans design systems within incentives.

Architectures, safety layers, training data, transparency policies — they don’t emerge in a vacuum. They are shaped by strategic decisions, commercial interests, regulatory pressure, investor expectations, and competitive dynamics.

That doesn’t automatically make them malicious. But it does make them human. And humans are influenced by power, capital, and ambition.

So the idea that AI is “safe” simply because developers implemented guardrails is incomplete. Guardrails reduce risk, but they don’t work 100% of the time. And they don’t remove responsibility.

If AI is probabilistic, responsibility is distributed. It sits in multiple layers.

Developers who define architecture, objectives, and constraints. Investors who shape incentives and speed of deployment. Organizations that decide how AI enters workflows. Leaders who set standards for verification and usage. Users who choose whether to question or simply accept. And in another domain entirely: Parents and educators who decide how and when young people are exposed to these systems.

Children don’t need unlimited access. They need guidance. They need boundaries. They need context. Because confidence in tone is not the same as truth.

I don’t think AI will disappear. And it shouldn’t. We are not going back.

The question is how we choose to coexist with it.

What kind of professionals do we want to be? What kind of leaders? What kind of parents? What kind of society?

On a professional level, responsibility becomes practical.

It looks like: Clear workflows. Defined review protocols. Risk categorization. AI literacy. Human sign-off in high-stakes decisions.
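As one possible way to picture risk categorization and human sign-off, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the three risk levels, the `Draft` fields, and the `can_send` rule are hypothetical, not a standard and not a description of any specific tool. The point is only that the rule is written down, so the decision to trust an output is explicit rather than left to the moment.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Risk(Enum):
    # Hypothetical categories; each team would define its own.
    LOW = 1     # e.g. internal brainstorming notes
    MEDIUM = 2  # e.g. routine client communication
    HIGH = 3    # e.g. negotiation preparation, strategic decisions


@dataclass
class Draft:
    text: str
    risk: Risk
    verified: bool = False             # facts checked against a source
    approved_by: Optional[str] = None  # human who signed off, if any


def can_send(draft: Draft) -> bool:
    """Return True only if the draft meets the review rule for its risk level."""
    if draft.risk is Risk.LOW:
        return True
    if draft.risk is Risk.MEDIUM:
        return draft.verified
    # HIGH: verification and a named human sign-off are both required.
    return draft.verified and draft.approved_by is not None


if __name__ == "__main__":
    memo = Draft("AI-generated negotiation brief", Risk.HIGH, verified=True)
    print(can_send(memo))        # blocked: nobody has signed off yet
    memo.approved_by = "Tina"
    print(can_send(memo))        # allowed: verified and signed off
```

In this sketch a high-stakes draft cannot go out on the AI’s confidence alone. A person has to put their name on it.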

In my own work, I use AI to structure thinking, not to replace it. To organize complexity. To support partners with clarity. To create systems so that ten people don’t have to solve the same problem ten times.

Even in negotiation preparation, AI helps structure perspectives — but the final judgment stays human.

AI accelerates structure. It does not own consequence.

Responsibility doesn’t mean fear. It means vision.

If we want to use powerful tools while preserving clarity, trust, and human dignity, then responsibility cannot be outsourced to code.

AI is probabilistic. Responsibility isn’t.

4. The Absurd Standard We Set

There is something slightly absurd in the way we talk about AI.

We demand perfection from a probabilistic system. We expect it to be unbiased, precise, transparent, ethical, creative, reliable, and safe — at scale.

And at the same time, we struggle to hold ourselves to similar standards. We forward articles without reading them fully. We skim reports. We rely on summaries. We react before verifying.

Then AI produces something imperfect — and we are surprised. But what exactly did we expect?

A system trained on human data to behave better than humans?

A model shaped by incentives and capital to operate outside incentives and capital?

A tool designed to predict, to suddenly know?

AI reflects us.

Our data. Our patterns. Our blind spots. Our ambitions. Our shortcuts.

AI can, and will, be wrong.

The real question is whether we design our lives, our companies, and our societies in a way that can absorb that fact responsibly.

Performance is ownership, not automation.

Be well

Tina